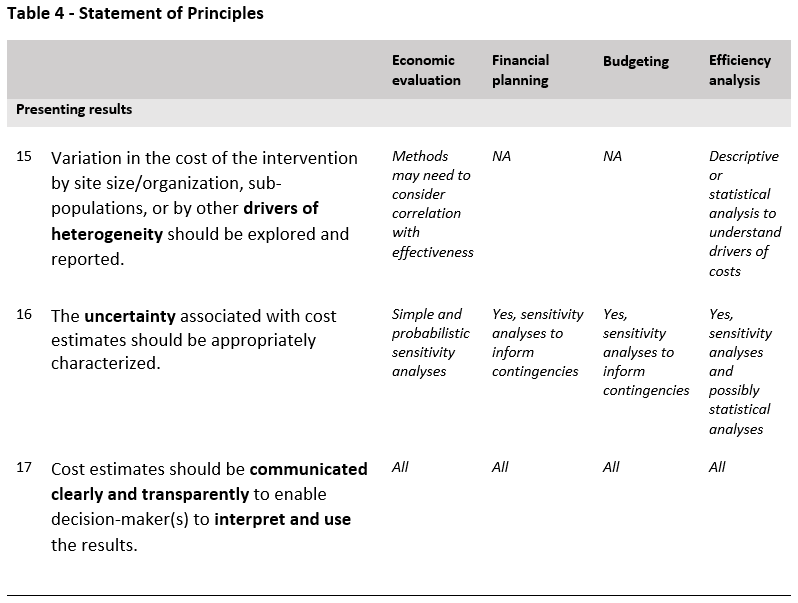

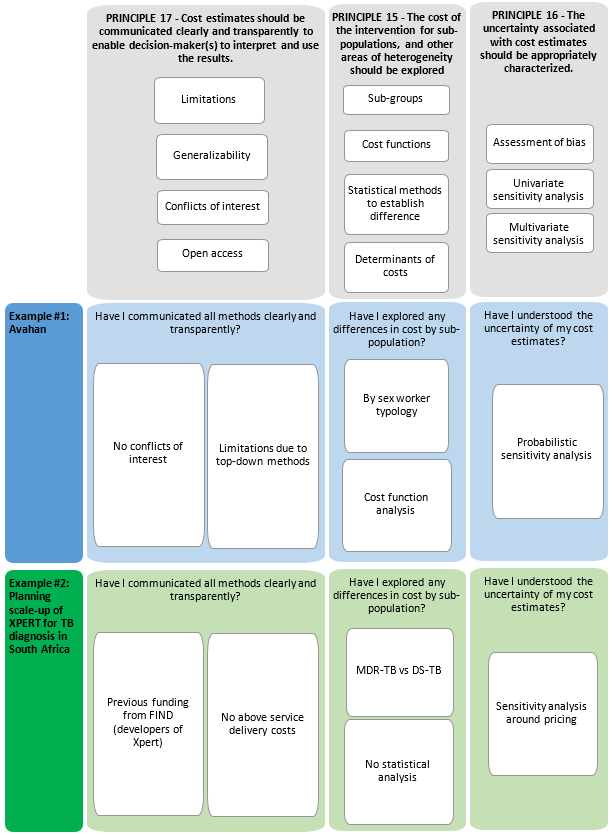

The fourth part of the Reference Case focuses on analyzing and presenting results. Three principles are defined, represented in Table 4 and in the figure at the end of the section.

As the introduction section of the Reference Case explains, unit costs are rarely constant over scale (or other organizational characteristics), and in many cases, may not be similar for different sub-groups of populations. Hence, it is important to explore differences in cost by site and population group. Exploration and reporting of these heterogeneities will also assist in extrapolating costs from the study to other settings and scales of delivery.

With respect to population group, presenting aggregate unit costs may in some cases be highly misleading if others apply them to populations with different characteristics. For example, an average cost of treatment across drug-susceptible TB (DS-TB) and multidrug-resistant TB (MDR-TB) patients would only be relevant to settings with approximately the same mix of DS-TB and MDR-TB patients. In this case, the unit cost of treatment for each patient group would be more useful. In addition, it will be important to consider underlying conditions or co-morbidities that may affect health-care costs for other diseases [83].

While it is preferable to examine average cost functions rather than a single ‘unit cost’, the amount of data needed to do this is substantial. In general, estimating cost functions requires relatively large sample sizes (more than 30 facilities or other sample units), which may be beyond the funding available. We therefore do not recommend this as a minimum standard. Nevertheless, we recommend reporting unit costs by site (e.g., facilities or other units of observation) together with a set of site characteristics (see minimum reporting standard and example tables in Appendices 2 and 4). Mean unit cost estimates can also be disaggregated by other categories that may drive heterogeneity, including service delivery platform, type of setting (e.g., rural and urban), and quality of care. Heterogeneity should be explored in sub-groups of the population where the differences are likely to have an important influence on costs.

Categorical or sub-group formation should be informed by both the characteristics of different populations and determinants that may influence unit costs such as geographic location. Where feasible the identification of these characteristics can be aided with formal statistical testing of differences. Where differences are found, unit costs should be presented by sub-group and a weighted average constructed for the whole population. It should also be noted that the presentation of unit costs by sub-groups may also be desirable from a programmatic perspective (e.g., where programs may be interested in a stigmatized or high-risk population).
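The weighted average described above can be sketched in a few lines. This is a minimal illustration, not a prescribed method: the sub-group names follow the TB example earlier in this section, while the unit costs, patient numbers, and the function name `weighted_average_unit_cost` are hypothetical values chosen only to make the arithmetic visible.

```python
# Hypothetical sub-group data: unit cost per patient treated (USD) and
# number of patients. Figures are illustrative, not taken from any study.
study_setting = {
    "DS-TB": {"unit_cost": 200.0, "patients": 900},
    "MDR-TB": {"unit_cost": 4000.0, "patients": 100},
}

# A second hypothetical setting with the same unit costs but a different case mix.
other_setting = {
    "DS-TB": {"unit_cost": 200.0, "patients": 700},
    "MDR-TB": {"unit_cost": 4000.0, "patients": 300},
}


def weighted_average_unit_cost(groups):
    """Return the patient-weighted mean unit cost across sub-groups."""
    total_cost = sum(g["unit_cost"] * g["patients"] for g in groups.values())
    total_patients = sum(g["patients"] for g in groups.values())
    return total_cost / total_patients


study_average = weighted_average_unit_cost(study_setting)
other_average = weighted_average_unit_cost(other_setting)

print(f"Study setting average: {study_average:.2f} USD per patient")
print(f"Other setting average: {other_average:.2f} USD per patient")
```

With these assumed figures the aggregate average more than doubles when the share of MDR-TB patients rises from 10% to 30%, which is why reporting the sub-group unit costs alongside the weighted average lets others re-weight for their own population.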

Where sample sizes are larger, econometric approaches to characterizing cost functions can be used. It is beyond the scope of the Reference Case to provide guidance on these methods at this time.

Since many global health-costing studies have only a handful of sites (or other units of observation), there is often no formal method used to characterize the precision of the estimate. Even measures of dispersion are rarely presented. However, there is likely to be considerable uncertainty in any cost estimate due to both bias and lack of precision. Results may be misleading if the uncertainty is not fully characterized and the user is not made aware of the possible gap between the estimate and reality. Further, exploring the sensitivity of the cost to any assumptions or exclusions made can enhance the generalizability of results.

The uncertainty of any cost estimate should be fully characterized. For studies with multiple sites (or other units of observation), this should at a minimum include an assessment of precision (e.g., confidence intervals, or percentiles). Care should be taken to examine whether the observations are normally distributed, and where they are not, to use the appropriate statistical techniques. In addition, where relevant, basic or more complex sensitivity analyses should be applied in standard ways (see economic evaluation textbooks).
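One way to report precision when site-level unit costs are skewed is sketched below. It is an illustration under stated assumptions rather than a recommended standard: the eight site costs are hypothetical, the percentile bootstrap is one of several possible techniques for non-normal data, and the helper name `bootstrap_mean_interval` is invented for this example. With very few sites, a bootstrap interval is itself imprecise and should be read with caution.

```python
import random
import statistics

# Hypothetical unit costs (USD per visit) observed at eight sites.
# Two high-cost sites make the distribution right-skewed.
site_unit_costs = [4.2, 5.1, 3.8, 12.5, 4.9, 6.3, 5.5, 21.0]


def bootstrap_mean_interval(values, n_resamples=5000, level=0.95, seed=1):
    """Percentile bootstrap interval for the mean of a small sample."""
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    means = sorted(
        statistics.fmean(rng.choices(values, k=len(values)))
        for _ in range(n_resamples)
    )
    tail = (1 - level) / 2
    lower_idx = round(tail * n_resamples)
    upper_idx = round((1 - tail) * n_resamples) - 1
    return means[lower_idx], means[upper_idx]


mean_cost = statistics.fmean(site_unit_costs)
median_cost = statistics.median(site_unit_costs)
q1, _, q3 = statistics.quantiles(site_unit_costs, n=4)  # quartile cut points
low, high = bootstrap_mean_interval(site_unit_costs)

print(f"Mean: {mean_cost:.2f}, median: {median_cost:.2f}")
print(f"Interquartile range: {q1:.2f} to {q3:.2f}")
print(f"95% bootstrap interval for the mean: {low:.2f} to {high:.2f}")
```

Reporting the median and percentiles next to the mean makes the skew visible, and resampling avoids relying on a normal approximation that a small, skewed sample is unlikely to satisfy.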

It is particularly important to characterize the bias in the estimate by referring to:

- Sampling that may reflect higher- or lower-cost sites or populations disproportionately

- Completeness – what elements of costs are missing (inputs, service use, providers)

- Possible under- or over-reporting of elements such as service and time use due to the data collection methods or program features

- Distortions or incompleteness in the prices of inputs.

While it may not always be feasible to quantify bias, the characteristics and direction of any bias should be reported in the study limitations.

Finally, any discussion section should include recommendations in terms of the generalizability of estimates to other settings and scales. For example, it may be important to highlight how service delivery may differ between the studied program (often a demonstration or pilot) and scaled-up operation (which may achieve efficiencies in staffing or different input prices).

Cost estimates may be used for multiple purposes, including policy development and broader economic analysis. The characteristics of a ‘good estimate’ will vary depending on its purpose. If a cost estimate is used for the wrong purpose, or if its limitations are not described, it can be misleading. Moreover, even the most methodologically robust costing will not be informative if the methods and results are not reported clearly.

Importantly, for a cost estimate to be transferable over setting and time, analysts and users require transparency about its components, any assumptions made, its uncertainty and its limitations. Specifically, it needs to be clear how an intervention cost is constructed from its components, commonly: data on service use, the unit costs of that use, and the quantity and prices of inputs that determine that unit cost. This will allow analysts in other settings to adjust for differences in prices or other factors that affect the cost of delivery [84]. This clarity is also required to meet the minimum academic standard of replicability.

To facilitate the transfer of costs across setting or time, a clear description of setting is also important. For example, economies of scope and scale often affect cost [85], so understanding the coverage and integration will assist others in applying the cost estimate elsewhere. In addition, providing breakdowns of cost by activity may assist those adapting the intervention to their setting in identifying where they may have some activities already in place, or help in the financial planning of scale-up.

Finally, given the levels of public investment in these data, there are increasing requests for the full dataset to be provided using open access facilities, and it is good research practice to declare conflicts of interest.

The Reference Case details ‘Minimum Reporting Standards’ in the next section, which outline the aspects that need to be reported to ensure minimal compliance with the transparency principle. These reporting standards reflect the method specifications provided above and state that the purpose of the costing should be fully and accurately described, that the choice of costing to address the purpose should be justified, and that the intervention and context should be clearly characterized. The limitations of any method and their likely effect on a specific estimate should be fully transparent and, as with any scientific report, declarations of conflicts of interest should be made.

The transparency principle applies both to the reporting of costs, and to the intervention or the site characteristics (or other units of observation) to enable others to interpret whether the costs would be relevant for their setting.

Where total costs are reported, both the number of units and the unit cost should also be reported.

Where intervention unit costs per person are composed of unit costs for services (e.g., visit costs) multiplied by service use (e.g., number of visits), these ‘P’s (‘prices’) and ‘Q’s (‘quantities’) should be reported. If feasible, Ps and Qs should also be reported for inputs (e.g., staff numbers and wages). However, in some cases where only expenditures are known, this may not be possible.
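The relationship between Ps, Qs, the unit cost per person, and the total cost can be shown with a short worked sketch. All service names, prices, quantities, and the number of people treated below are hypothetical, and the function `cost_per_person` is named here only for illustration.

```python
# Hypothetical services making up an intervention:
# p = unit cost of the service (USD), q = mean number of uses per person.
services = [
    {"service": "outpatient visit", "p": 5.0, "q": 6},
    {"service": "laboratory test", "p": 12.0, "q": 2},
    {"service": "monthly drug supply", "p": 8.0, "q": 6},
]
people_treated = 250  # hypothetical number of units (people)


def cost_per_person(rows):
    """Sum of price times quantity over all services."""
    return sum(row["p"] * row["q"] for row in rows)


unit_cost = cost_per_person(services)
total_cost = unit_cost * people_treated

# Report the Ps and Qs together with the derived unit and total costs.
for row in services:
    line_cost = row["p"] * row["q"]
    print(f'{row["service"]}: P={row["p"]:.2f}, Q={row["q"]}, PxQ={line_cost:.2f}')
print(f"Unit cost per person: {unit_cost:.2f} USD")
print(f"Total cost for {people_treated} people: {total_cost:.2f} USD")
```

Because each P and Q is listed separately, an analyst in another setting could substitute local prices or service-use patterns and recompute the unit cost, which is not possible when only the total is published.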

Even if other units are used, reporting should at a minimum be done using standardized unit costs where available. This Reference Case includes examples of standardized reporting formats for TB and HIV services that include a list of units for standardized unit costs.

Where relevant cost data are reported, disaggregation should be provided by site (or measures of dispersion presented) and by input and activity.

These should be considered minimum reporting standards to ensure minimal compliance with the transparency principle. Minimum Reporting Standards do not impose any additional methodological burden on researchers as they draw on information and data that must normally be considered in estimating costs.

Finally, it is strongly recommended that analysts feed the results back to the sites and organizations from which data were collected. This can create buy-in and provides an additional means of validating the results.